With Microsoft’s announcement of Microsoft Fabric in public preview at the Microsoft Build conference, today is one of the best days for Power BI and cloud computing/analytics fans.

Not only is Power BI itself getting a whole lot more powerful with a brand-new storage mode (Direct Lake), source control, and AI assistance, but a suite of other capabilities (Data Integration, Data Engineering, Data Science, Data Warehousing, and more) is now available side by side with Power BI in a Software as a Service (SaaS) model, and they all work seamlessly together! Very soon CoPilot will also arrive here to assist with code, query, and report generation.

The breadth and depth of analytical engines and tooling that have been unleashed into one familiar interface, in a Software as a Service model with simple capacity-based pricing, is unreal! A change like this does not come often in the IT and analytics world.

Say Hello to Microsoft Fabric!

Microsoft Power BI/Fabric capacities can now handle workloads such as Data Integration, Data Engineering, Data Science, Data Warehousing, and much more, all in one place, in a lake-centric solution where all data is stored in the open-source Delta Parquet format! No more proprietary vendor storage format to get locked into. All of this is called Microsoft Fabric. If you already own Power BI Premium capacities, you now own Fabric capacities: you can enable the new Fabric experiences from the admin portal, start using them right away, and monitor their performance from the Power BI Premium Capacity Metrics app. If you don’t own a Power BI Premium capacity, you can start with a free 60-day trial capacity and purchase Fabric capacities starting June 1st. I have posted a video here that shows you how to enable Fabric.

If you are familiar with Power BI workspaces, that is where you will see all the new artifacts, such as lakehouses, warehouses, pipelines, datasets, and reports. The beauty of this integration is that, since everything is in one place, you can build Power BI datasets and reports from a lakehouse or warehouse without having to go through a Synapse SQL endpoint.

Why does the Software as a Service (SaaS) model matter?

Previously, Azure Synapse Analytics had made great progress towards bringing many workloads (Data Engineering, Data Science, Data Warehousing, and more) together; however, it was still offered as Platform as a Service (PaaS) and required a significant amount of provisioning and an understanding of Azure role-based access control (RBAC), networking, managed identities, service principals, interactive authoring in Synapse pipelines, and more. You also still had to stitch it together with other Azure services. While some Azure experts could breeze through these tasks, they were still cumbersome for most admins, and in some organizations this was a very slow process due to non-technical policies. Microsoft Fabric solves these problems by abstracting away this complexity and letting organizations focus on their data instead of infrastructure.

Microsoft Fabric Experiences

Here I am going to focus on the Power BI, Data Factory, and Synapse experiences that are available in preview as of today. Data Activator will enter preview later.

Power BI

Direct Lake storage mode: Power BI now has a third storage mode called See-through mode, also known as Direct Lake. Note that this is not the same as DirectQuery; it is much better and faster, combining the best of Import and DirectQuery. The VertiPaq engine, which is Power BI’s storage engine (Azure Analysis Services behind the scenes), has been significantly enhanced so that it now works natively with Delta Parquet files as its own storage. This means that the Analysis Services engine, which used to work only with a proprietary storage format, can now read data directly from the lake, which in turn means no data movement while still getting the performance of Import mode. This feature will be in preview for now.

CoPilot: For those who still struggle with DAX, say hello to your new best tutor! CoPilot can help you write DAX: you describe what you want in natural language, and it produces the DAX for you. This feature will be in preview for now.

Power BI Desktop Developer Mode: It is finally here! Git integration (source control) for Power BI. At first, this will be limited to Azure DevOps; GitHub support will come later. This feature will be in preview for now.

Data Factory

Data Factory in Microsoft Fabric brings together the best of Power Query and Azure Data Factory/Synapse Pipelines in one place. You use Data Pipelines to orchestrate data integration tasks. You can use a Copy Activity in a Data Pipeline to copy data into a lake storage area. From there, you can use the low-code/no-code power of Dataflows Gen2 with 300+ transformations to visually integrate all your data with 100+ connectors and load the data into many destinations such as a Lakehouse, a Warehouse, and more.

Also gone are the days of worrying about those pesky Integration Runtimes (IRs). I especially do not miss the Auto Resolve Integration Runtime in Synapse. IRs were similar to Power BI gateways and handled data movement for Azure Data Factory and Synapse Pipelines. All of these activities are now handled by Fabric and abstracted away for good!

Synapse Data Engineering / Warehouse / Data Science / Real-Time Data

The Synapse portion alone brings a ton of capabilities into Fabric that are hard to summarize. For those of us who worked with Azure Synapse Analytics (Gen 2) and had been counting the days until Synapse Gen 3 showed up, this is a very nice surprise! It turns out Synapse Gen 3 was never slated to come to Azure Synapse Analytics; instead, Microsoft had bigger plans, and that was to bring it to Fabric. There are many improvements and additions over Azure Synapse Gen 2. Here are a few highlights:

Data Warehouse:

Synapse now provides one unified SQL pool, also known as a warehouse, that brings together the best of Azure Synapse Analytics Serverless SQL pools and Dedicated SQL pools and serves those workloads that prefer to use SQL. Think Serverless SQL pools with the full DDL support of Dedicated SQL pools. Azure Synapse Dedicated SQL pools stored data in a proprietary format; here everything is in Delta Parquet format, and the warehouse scales automatically. You don’t have to worry about scaling, data distributions…

With this new warehouse, you have a lot of flexibility in how you populate it. You can use the low-code/no-code experience of Dataflows Gen2, SQL, or the brand-new visual query builder.

The following diagram from Microsoft shows one of the common patterns for implementing a warehouse.

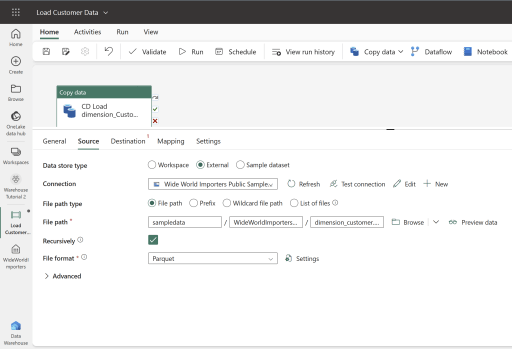

You can use pipelines and dataflows to load data into a warehouse. The following image shows a sample Copy Data activity:

The following image shows the destination options for a Copy activity in a pipeline.

Creating a new table is a breeze!

Right after the pipeline runs, you can go to your warehouse, browse the data, and query it with SQL.

Default Dataset: One last gem in this area is that each warehouse comes with a default Power BI dataset already built in! This dataset gets updated as new tables are added to the warehouse. Notice the Model tab at the bottom of the above screenshot. This is where you can enrich the model by adding relationships and more.

Using SQL to create/populate tables:

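When creating tables, you have the option of using SQL instead of the low-code/no-code interface of Dataflows. For example, the following COPY INTO statement loads the WideWorldImportersDW dimension_city sample from Parquet files in Azure Blob Storage into a warehouse table: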

```sql
COPY INTO [dbo].[dimension_city]
FROM 'https://azuresynapsestorage.blob.core.windows.net/sampledata/WideWorldImportersDW/parquet/full/dimension_city/*.parquet'
WITH (FILE_TYPE = 'PARQUET');
```
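The COPY INTO statement loads data into a target table, so in a fresh warehouse you would typically create that table first and check the result afterwards. The following is a minimal sketch of both steps. The column list is an assumption modeled on the WideWorldImportersDW dimension_city sample, so verify it against the Parquet files’ schema before relying on it.

```sql
-- Sketch: create the target table before running the COPY INTO statement.
-- ASSUMPTION: column names and types are modeled on the WideWorldImportersDW
-- dimension_city sample; check them against the Parquet schema first.
DROP TABLE IF EXISTS [dbo].[dimension_city];

CREATE TABLE [dbo].[dimension_city]
(
    [CityKey]                  INT            NULL,
    [WWICityID]                INT            NULL,
    [City]                     VARCHAR(8000)  NULL,
    [StateProvince]            VARCHAR(8000)  NULL,
    [Country]                  VARCHAR(8000)  NULL,
    [Continent]                VARCHAR(8000)  NULL,
    [SalesTerritory]           VARCHAR(8000)  NULL,
    [Region]                   VARCHAR(8000)  NULL,
    [Subregion]                VARCHAR(8000)  NULL,
    [Location]                 VARCHAR(8000)  NULL,
    [LatestRecordedPopulation] BIGINT         NULL,
    [ValidFrom]                DATETIME2(6)   NULL,
    [ValidTo]                  DATETIME2(6)   NULL,
    [LineageKey]               INT            NULL
);

-- ...run the COPY INTO statement here, then sanity-check the load:
SELECT COUNT(*) AS row_count
FROM [dbo].[dimension_city];

-- Peek at a few rows
SELECT TOP (10) [City], [StateProvince], [Country]
FROM [dbo].[dimension_city]
ORDER BY [City];
```

The DROP TABLE IF EXISTS line makes the script safe to rerun while you experiment; remove it once the table holds data you want to keep.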

Using Visual Query Editor to create/populate tables:

And last but not least, you can use a brand-new experience with the Visual Query Builder that lets you enrich your data by combining tables …

Lakehouse:

You can either use the low-code/no-code power of Data Factory (Dataflow Gen2) or use Spark notebooks for a code-first approach for data transformations and land the data in a data lake (storage). From there, a lakehouse can pick up the delta files and present them as delta tables for developers to consume in Power BI. A few highlights are:

- In the lakehouse, you have access to the full power of Synapse notebooks.

- The Spark pools start much faster than Azure Synapse Analytics Spark pools. Think seconds vs. minutes.

- Microsoft plans to add the capability to attach many notebooks to the same pool, so you don’t have to wait for a Spark pool to start up for every single notebook.

- Soon CoPilot will be available here as well to help you write PySpark and SparkSQL in your notebooks.

The lakehouse is the experience that data scientists will feel more at home in.
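For the code-first path in a lakehouse, a notebook cell can shape raw data and save the result as a Delta table that the lakehouse then exposes. The sketch below uses Spark SQL; in a notebook whose default language is PySpark, a cell that starts with the %%sql magic runs Spark SQL. The table and column names (sales_raw, sales_clean, order_id, order_date, amount) are hypothetical, and sales_raw is assumed to already exist in the attached lakehouse.

```sql
-- Spark SQL sketch for a Fabric notebook cell.
-- HYPOTHETICAL names: sales_raw must already exist in the attached lakehouse.
-- Build a cleaned Delta table from the raw table.
CREATE TABLE IF NOT EXISTS sales_clean
USING DELTA
AS
SELECT
    order_id,
    CAST(order_date AS DATE)       AS order_date,
    CAST(amount AS DECIMAL(18, 2)) AS amount
FROM sales_raw
WHERE amount IS NOT NULL;

-- Query the new Delta table like any other table
SELECT order_date, SUM(amount) AS daily_amount
FROM sales_clean
GROUP BY order_date
ORDER BY order_date;
```

CREATE TABLE IF NOT EXISTS keeps the cell safe to rerun, but it will not refresh a table that already exists; a CREATE OR REPLACE TABLE variant would rebuild it on every run instead.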

The following diagram from Microsoft shows one of the common patterns for implementing a lakehouse.

References

Learn more about Microsoft Fabric here.
